last-line.dev /// michel-marie maudet · linagora

I wrote my last line of code in December 2025_

How I became a developer again while remaining CEO of a 200-person company.

A first-hand account. No filter.

CEO · LINAGORA · Open Source AI

git log --author=mmaudet --stat

One year. One inflection point. No touch-ups.

I run a 200-person company. No official dev time allocated.

Yet here is what happened after November 2025.

git tag -a v0.0 -m "before"

November 2025. Claude Code.

Something unlocks.

$ claude "build me an OVH cost dashboard"

● Reading project structure...

● Writing src/components/CostChart.tsx

● Running tests... ✓ 12 passed

● Committing: feat(dashboard): add cost breakdown view

An AI that reads your code, understands your architecture, runs commands and tests, and commits, all while keeping track of where it is in the task.

Vision capabilities too: screenshot your UI and Claude sees and diagnoses the visual bug directly.

ls ~/projects/ // three intentions that became code

Three projects. One proof.

ovh-cost-manager

React · Express · SQLite

An internal dashboard that was missing from our stack. I described what I needed; Claude built it in hours: monthly billing breakdown, service comparisons, trend charts, cost forecasting. In production the next day, open source the day after.

AGPL-3.0

⎋ github.com/mmaudet/ovh-cost-manager
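Under the hood, the billing breakdown and the forecast come down to two small computations. The sketch below is hypothetical and not taken from ovh-cost-manager. It assumes a flat list of invoice lines, each with a `month` (`YYYY-MM`), a `service` name and an amount in euros. It aggregates them per month and per service, then projects the next month with an ordinary least-squares trend line. The real project may model OVH billing data and forecasting quite differently.

```typescript
// Hypothetical invoice line shape; not the ovh-cost-manager schema.
interface InvoiceLine {
  month: string; // "YYYY-MM"
  service: string; // e.g. "compute", "storage"
  amountEur: number;
}

// Breakdown per month and service: month -> service -> total amount.
function breakdown(lines: InvoiceLine[]): Map<string, Map<string, number>> {
  const byMonth = new Map<string, Map<string, number>>();
  for (const { month, service, amountEur } of lines) {
    const services = byMonth.get(month) ?? new Map<string, number>();
    services.set(service, (services.get(service) ?? 0) + amountEur);
    byMonth.set(month, services);
  }
  return byMonth;
}

// Grand total per month, in chronological order ("YYYY-MM" sorts as text).
function monthlyTotals(lines: InvoiceLine[]): number[] {
  const totals = new Map<string, number>();
  for (const l of lines) {
    totals.set(l.month, (totals.get(l.month) ?? 0) + l.amountEur);
  }
  return [...totals.entries()]
    .sort(([a], [b]) => a.localeCompare(b))
    .map(([, total]) => total);
}

// Ordinary least-squares trend through (index, total), evaluated one month ahead.
function forecastNext(totals: number[]): number {
  const n = totals.length;
  if (n === 0) return 0;
  if (n === 1) return totals[0];
  const xMean = (n - 1) / 2;
  const yMean = totals.reduce((sum, y) => sum + y, 0) / n;
  let num = 0;
  let den = 0;
  totals.forEach((y, x) => {
    num += (x - xMean) * (y - yMean);
    den += (x - xMean) ** 2;
  });
  return yMean + (num / den) * (n - xMean);
}

const sample: InvoiceLine[] = [
  { month: "2025-10", service: "compute", amountEur: 80 },
  { month: "2025-10", service: "storage", amountEur: 20 },
  { month: "2025-11", service: "compute", amountEur: 90 },
  { month: "2025-11", service: "storage", amountEur: 20 },
  { month: "2025-12", service: "compute", amountEur: 100 },
  { month: "2025-12", service: "storage", amountEur: 20 },
];
console.log(forecastNext(monthlyTotals(sample))); // 130 (totals 100, 110, 120)
```

A straight trend line is the simplest defensible forecast. Seasonality or step changes, such as a new server tier, would need a richer model.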

browserlet

Chrome Extension · BSL/YAML · TypeScript

Semantic web automation with a key architectural choice: AI generates the automation script once using semantic selectors and ARIA roles, then execution is fully deterministic with no AI at runtime. A credential vault with AES-256-GCM encryption. Zero-trust in the browser.

Open Source

⎋ github.com/mmaudet/browserlet
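The "generate once, replay deterministically" split can be shown without a browser. In this hypothetical sketch, the AI's only job is to emit a list of steps that target elements by ARIA role and accessible name. The runtime then resolves each step against the page's accessibility tree with plain code, so the same script on the same page always hits the same element. The step format and the tree shape are assumptions, not Browserlet's actual BSL/YAML schema. The tree here is a plain object; in an extension it would be derived from the DOM.

```typescript
// Hypothetical accessibility-tree node, standing in for the live DOM.
interface AXNode {
  role: string; // ARIA role, e.g. "button", "textbox"
  name: string; // accessible name, e.g. "Log in"
  value?: string;
  children?: AXNode[];
}

// Hypothetical step format: emitted once by the AI, stored, replayed as-is.
type Step =
  | { action: "fill"; role: string; name: string; value: string }
  | { action: "click"; role: string; name: string };

// Depth-first lookup by semantic selector (exact role + accessible name).
function findByRole(node: AXNode, role: string, name: string): AXNode | undefined {
  if (node.role === role && node.name === name) return node;
  for (const child of node.children ?? []) {
    const hit = findByRole(child, role, name);
    if (hit) return hit;
  }
  return undefined;
}

// Deterministic replay: no model call at runtime. A missing target is an
// error, not something to improvise around.
function replay(root: AXNode, steps: Step[]): string[] {
  const log: string[] = [];
  for (const step of steps) {
    const target = findByRole(root, step.role, step.name);
    if (!target) throw new Error(`No ${step.role} named "${step.name}"`);
    if (step.action === "fill") target.value = step.value;
    log.push(`${step.action} ${step.role} "${step.name}"`);
  }
  return log;
}

const page: AXNode = {
  role: "form",
  name: "Sign in",
  children: [
    { role: "textbox", name: "Email" },
    { role: "button", name: "Log in" },
  ],
};

const script: Step[] = [
  { action: "fill", role: "textbox", name: "Email", value: "me@example.com" },
  { action: "click", role: "button", name: "Log in" },
];

console.log(replay(page, script));
```

Selecting by role and accessible name, rather than by CSS classes or XPath, is what lets a script like this survive cosmetic redesigns.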

visio-mobile

Rust · iOS + Android + Desktop

Native mobile app for La Suite Numérique Meet. Built in Rust, using the LiveKit Rust SDK for real-time video. Adaptive UI that switches layout based on mobility context: full interface when stationary, compact one-hand layout when walking, ultra-minimal with giant mute button when driving. Local Whisper transcription planned.

AGPL-3.0

⎋ github.com/mmaudet/visio-mobile
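The context-driven layout switch is essentially a pure function from sensed motion to a layout mode. The sketch below is written in TypeScript for consistency with the other examples, although visio-mobile itself is written in Rust. The speed thresholds and the `inVehicle` signal are hypothetical placeholders, not values from the project.

```typescript
type Layout = "full" | "compact-one-hand" | "minimal-driving";

// Hypothetical sensor snapshot; a real app would derive it from
// platform motion/activity APIs.
interface MobilityContext {
  speedKmh: number; // estimated ground speed
  inVehicle: boolean; // e.g. from an activity-recognition signal
}

// Hypothetical thresholds, chosen for illustration only.
const WALKING_MIN_KMH = 2;
const DRIVING_MIN_KMH = 15;

// Most restrictive context wins: driving, then walking, then stationary.
function selectLayout(ctx: MobilityContext): Layout {
  if (ctx.inVehicle || ctx.speedKmh >= DRIVING_MIN_KMH) return "minimal-driving";
  if (ctx.speedKmh >= WALKING_MIN_KMH) return "compact-one-hand";
  return "full";
}

console.log(selectLayout({ speedKmh: 4, inVehicle: false }));
```

Keeping the decision in one pure function makes it easy to unit-test. A production version would also need some hysteresis so the UI does not flicker when the speed hovers around a threshold.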

3× to 50×

faster delivery

3 months → 3 days

0

lines written by hand

since December 2025

1 month only

advanced MVP · 3 platforms · 2 native mobile

git log // evolution of AI-assisted development

Four phases. Most people stop at phase 2.

Phase 1

Editor generation

Copilot · Cursor

Phase 2

Vibe coding

Chat-based

Phase 3

Coding agent

Claude Code

Phase 4 ★

Context engineering

& meta-prompting


cat CLAUDE.md

The real skill: context, market fit & tests. Not code.

CLAUDE.md: what it contains

Architecture decisions (locked, not to be questioned)

Coding conventions enforced on every file

What NOT to do: explicit prohibitions

Tech stack rationale and version constraints

PR checklist and test expectations

Team vocabulary and domain concepts
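One way to keep a shared CLAUDE.md honest is to check it for the sections listed above. This is a hypothetical sketch. Claude Code reads CLAUDE.md as free-form Markdown and does not require any particular headings, so the section titles and the sample file below are an illustrative team convention, not a format Claude Code enforces.

```typescript
// Illustrative section titles mirroring the list above (a team convention,
// not something Claude Code requires).
const REQUIRED_SECTIONS = [
  "Architecture decisions",
  "Coding conventions",
  "Do not",
  "Tech stack",
  "PR checklist",
  "Vocabulary",
];

// Returns the required "## " headings missing from a CLAUDE.md text.
// A heading counts if it starts with the required title (case-insensitive).
function missingSections(claudeMd: string): string[] {
  const headings = claudeMd
    .split("\n")
    .filter((line) => line.startsWith("## "))
    .map((line) => line.slice(3).trim().toLowerCase());
  return REQUIRED_SECTIONS.filter(
    (s) => !headings.some((h) => h.startsWith(s.toLowerCase())),
  );
}

// A minimal sample CLAUDE.md for a hypothetical project.
const sample = `# CLAUDE.md
## Architecture decisions (locked)
- Express API + SQLite; no ORM.
## Coding conventions
- TypeScript strict mode in every file.
## Do not
- Never put secrets or API keys in frontend code.
## Tech stack
- Node 20, React 18.
## PR checklist
- All tests pass; new endpoints have tests.
`;

console.log(missingSections(sample)); // logs the one missing section: Vocabulary
```

Run in CI, a check like this keeps the context file from silently losing a section as the team edits it.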

Meta-prompting principles

Instruct HOW to work, not just WHAT to do

PRD (Product Requirements Doc) as structured agent input

Persistent project memory across sessions

Explicit error taxonomy defined upfront

Roles and responsibilities stated clearly
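"Explicit error taxonomy defined upfront" can be made concrete as a closed set of categories the agent must use when it reports a failure, each mapped to a handling policy agreed in advance. The categories and policies below are hypothetical, borrowed from the failure modes this page discusses (syntax bugs, test failures, architectural drift, security). The point of the sketch is that an exhaustive `switch` over a discriminated union lets the compiler flag any new category that has no policy yet.

```typescript
// Hypothetical taxonomy: each failure carries a kind plus structured details.
type AgentError =
  | { kind: "syntax"; file: string; message: string }
  | { kind: "test_failure"; test: string; message: string }
  | { kind: "architectural_drift"; rule: string; file: string }
  | { kind: "security"; finding: string; file: string };

type Policy = "auto_fix" | "retry_with_context" | "escalate_to_human";

// Handling policy per category, decided before the agent starts working.
function policyFor(err: AgentError): Policy {
  switch (err.kind) {
    case "syntax":
      return "auto_fix";
    case "test_failure":
      return "retry_with_context";
    case "architectural_drift":
    case "security":
      return "escalate_to_human";
    default: {
      // Compile-time exhaustiveness check: a new kind without a case fails here.
      const unreachable: never = err;
      return unreachable;
    }
  }
}

console.log(policyFor({ kind: "security", finding: "secret in bundle", file: "src/client/config.ts" }));
```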

The differentiating skill is no longer writing code.

It is writing the context, defining the market fit, and writing the tests. That is already what good tech leads do.

cat question.txt


What about legacy?


Great for greenfield, but what about legacy codebases with 1M+ lines of code? 🙂

Daniel Jarjoura

My conviction


On high-drift legacy code, incremental patching is a trap. LLMs can ingest the existing context at scale, in one pass. Use that to start clean.

01

Retro-doc first


The agent reads every repo and writes down what was never written: the actual architecture, implicit invariants, the technical debt, hidden dependencies. In a matter of hours.

$ claude "document the real architecture of these repos. Be brutal about the debt."

02

Migration PRD


From the retro-doc: a rewrite PRD aligned with current standards, built for debt reduction and compliance by design. The AI doesn't patch. It specifies the target.

INPUT: retro-doc + normative delta

OUTPUT: greenfield PRD

03

New codebase, inherited knowledge


Start clean, with all domain knowledge captured. The AI carries legacy memory without inheriting its scars. Drift is eliminated.

git init new-sovereign-stack

# legacy knowledge → CLAUDE.md

# legacy code → /dev/null

work in progress

Real case · Sovereign Dataspace

Eclipse EDC / Connector

multi-repos

legacy microservices

regulatory compliance


Retro-doc in progress across several Eclipse EDC microservices. Rewrite PRD in preparation. Target stack: sovereign, compliant, tested. Amplified architectural drift is exactly what we're avoiding by starting clean.


Full lessons-learned report → Q2 2026 · This slide will be updated.


Legacy isn't an obstacle to AI.

It's the raw material for a migration you'd never have dared to launch before.

diff claude-code.log open-code-sovereign.log

Two pipelines. One conviction.

Open Code · Sovereign infrastructure

OpenRouter + LibreChat stack

Open models: Qwen, Mistral, GLM, Kimi

Full confidentiality compliance

LINAGORA-hosted and auditable

Performance gap closing fast

The trajectory of 2026

Claude Code / Anthropic

Best reasoning quality today

Native context engineering via CLAUDE.md

Complex multi-file autonomous edits

Vision: from screenshot to diagnosed visual bug

US infrastructure

Data sovereignty concerns

I use both, depending on the project, and I see the gap narrowing every month.

tail -n 6 lessons-learned.log

Objective and measured learnings.

| Finding | Before | After |
|---|---|---|
| Time-to-orbit of a new project | 3 months | 3 days |
| Output quality driver | Model skill | Context quality (about 80%) |
| Dominant error type | Syntax bugs | Architectural drift |
| Required skill shift | Writing code | Specifying intent clearly |
| Context engineering learning curve | n/a | Real but short |
| Bottleneck | Execution | Specification and (manual) tests |

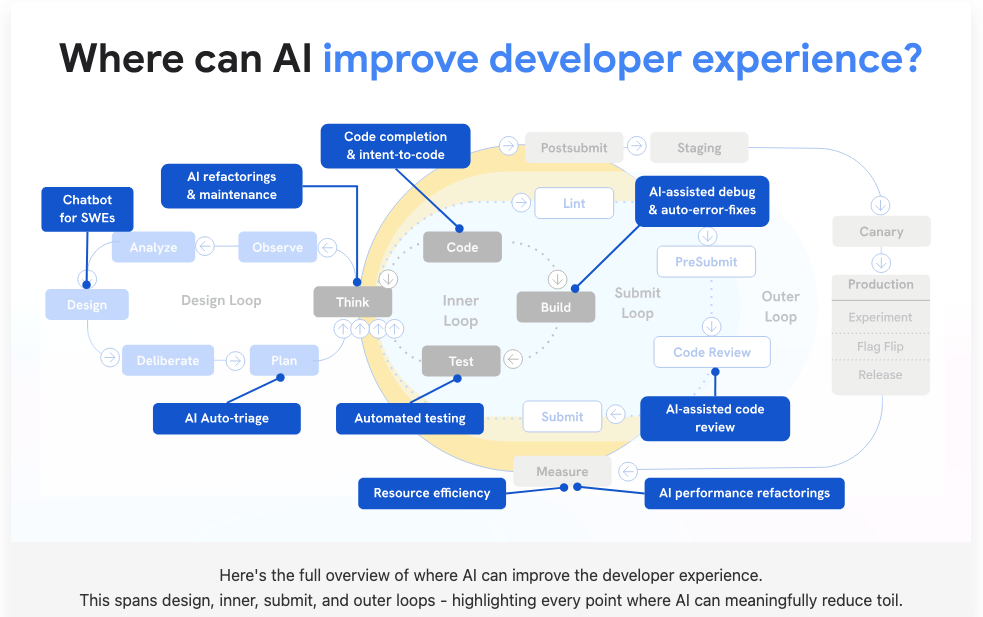

cat ai-dev-loops.md

Where can AI improve developer experience?

AI intervenes at every stage of the development cycle: design, inner loop, submit, and outer loop. Each loop is an opportunity to reduce friction and automate toil.

cat error-log.md

The 70% wall. Everyone hits it.

AI gets you to 70% of a working app fast. Then the last 30% becomes exponentially harder. Unless you have the right method.

Two steps back

You fix one bug and the AI introduces two more. Each fix cascades. Without architectural constraints, the agent has no guardrails and drifts a little further with each iteration.

Architectural drift

The code works but diverges silently from your intended design. Three files later, your architecture is unrecognizable. This is the dominant error type with AI code.
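Drift becomes detectable once the intended design is written down as machine-checkable rules. In this minimal, hypothetical sketch, the architecture is declared as the allowed dependencies between layers, and every import edge the agent produces is checked against that map. The layer names and the `src/<layer>/...` path convention are assumptions. Dedicated tools such as dependency-cruiser apply this idea to real codebases; the sketch only shows the principle.

```typescript
// Hypothetical layering: which other layers each layer may import from.
const ALLOWED: Record<string, string[]> = {
  ui: ["api-client", "shared"],
  "api-client": ["shared"],
  server: ["db", "shared"],
  db: ["shared"],
  shared: [],
};

interface ImportEdge {
  from: string; // importing file, e.g. "src/ui/CostChart.tsx"
  to: string; // imported file, e.g. "src/db/queries.ts"
}

// Layer = first directory under src/ (assumed convention).
function layerOf(path: string): string | undefined {
  const m = /^src\/([^/]+)\//.exec(path);
  return m?.[1];
}

// Returns a human-readable violation for every edge that breaks the rules.
function findDrift(edges: ImportEdge[]): string[] {
  const violations: string[] = [];
  for (const { from, to } of edges) {
    const a = layerOf(from);
    const b = layerOf(to);
    if (!a || !b || a === b) continue; // unknown or same-layer imports are not checked
    if (!(ALLOWED[a] ?? []).includes(b)) {
      violations.push(`${from} -> ${to}: "${a}" may not depend on "${b}"`);
    }
  }
  return violations;
}

console.log(findDrift([{ from: "src/ui/CostChart.tsx", to: "src/db/queries.ts" }]));
```

Run on every agent commit, a check like this turns silent drift into a failing build, which the agent can then fix within the stated constraints.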

Security blind spots

"Vibe coding is fun until you start leaking database credentials in the client." Missing input validation, secrets in the frontend, SQL injection: the AI doesn't think like an attacker.

The answer

Context engineering is the antidote to the 70% wall. CLAUDE.md + PRD + explicit prohibitions = the AI knows what it must not break. The 30% becomes tractable.
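Some of the security blind spots listed above are mechanical enough to catch before any human review. This is a deliberately naive, hypothetical sketch. It scans files that will ship to the browser for patterns that look like credentials. The regexes are illustrative heuristics, not a complete ruleset, and `src/client/` as the browser-bound directory is an assumed convention. Real projects would run a dedicated secret scanner, such as gitleaks, in CI.

```typescript
interface SourceFile {
  path: string;
  content: string;
}

// Illustrative heuristics only; real scanners ship hundreds of rules.
const SECRET_PATTERNS: { name: string; re: RegExp }[] = [
  { name: "AWS access key id", re: /\bAKIA[0-9A-Z]{16}\b/ },
  { name: "private key block", re: /-----BEGIN [A-Z ]*PRIVATE KEY-----/ },
  { name: "DB URL with password", re: /\b\w+:\/\/[^\s:/]+:[^\s@/]+@/ },
  { name: "hardcoded password", re: /password\s*[:=]\s*["'][^"']{4,}["']/i },
];

// Assumed convention: everything under src/client/ ships to the browser.
const isClientFile = (path: string): boolean => path.startsWith("src/client/");

// Returns "path:line rule" for each suspicious line in browser-bound files.
function scanClientBundle(files: SourceFile[]): string[] {
  const findings: string[] = [];
  for (const file of files.filter((f) => isClientFile(f.path))) {
    file.content.split("\n").forEach((line, i) => {
      for (const { name, re } of SECRET_PATTERNS) {
        if (re.test(line)) findings.push(`${file.path}:${i + 1} ${name}`);
      }
    });
  }
  return findings;
}

const leaky = 'const db = "postgres://admin:s3cret@db.internal:5432/app";';
console.log(scanClientBundle([{ path: "src/client/config.ts", content: leaky }]));
```

Listing a rule like "no credentials in client code" among the CLAUDE.md prohibitions and also enforcing it with a check covers both sides: the agent is told, and the pipeline verifies.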

echo 'unresolved' > questions.txt

Open questions. My own.

?

Augmented teams

In a team of 10 devs: who prompts, who reviews, who architects? The roles have not been reinvented yet.

?

Maintainability

Is generated code maintainable in 2 years by someone without the original context? What about turnover?

?

Complexity ceiling

Current projects are a few thousand lines each. What happens at 100k lines, with 3 years of technical debt?

?

Process

Gitflow, pair-programming with AI, code review of generated code: our processes must evolve.

?

Security by default

AI does not think like an attacker. Input validation, secrets management, injection prevention: none of this is built into its reasoning. Who takes responsibility for securing generated code?

diff role-2024.yml role-2026.yml

The role shifts.

What I observed in my own practice. Not theory. Lived experience.

BEFORE → AFTER

Writing code → Piloting context

I no longer write implementation. I write CLAUDE.md, PRDs, and constraints. The code is a byproduct of well-specified intent. My value is in what I prevent, not what I type.

BEFORE → AFTER

Implementation → Intent

I describe the business outcome I need, not the technical steps. The AI bridges intent to code. The skill is in expressing what you want clearly enough that the machine gets it right the first time.

BEFORE → AFTER

Solo debugging → AI pair-programming

The agent is my permanent pair. It reads the codebase, proposes fixes, runs tests, and learns from failures. I review diffs and validate architecture. The loop is faster than any human pair I've had.

BEFORE → AFTER

Reactive fixes → Proactive architecture

The AI catches drift before it compounds. With the right context, it flags security issues, suggests tests, and anticipates edge cases. I spend more time thinking ahead, less time patching.

The developer of tomorrow is not a faster coder.

They are a better specifier, reviewer, and architect.

git log HEAD..future/main

The gap is closing.

12–18 months.

| Model / Stack | Origin | Strength | Sovereignty |
|---|---|---|---|
| Qwen 3.x | Alibaba / open weights | Best open coding model | ✓ Self-hostable |
| GLM-4 | Zhipu AI / open weights | Multilingual + code | ✓ Self-hostable |
| Kimi K2 | Moonshot AI / open weights | Long context + reasoning | ✓ Self-hostable |
| Mistral | Mistral AI / European | Good, improving fast | ✓ Self-hostable, EU, auditable |
| Claude Code | Anthropic / US | Reference today | ✗ US infra |

The question is no longer AI or no AI.

It is: which infrastructure? Which governance?

The developer of tomorrow is a context engineer.

cat pipeline-2027.yml

The sovereign pipeline. 2027.

What the development workflow looks like when open models close the gap. Sovereignty built in, not bolted on.

01

Intent

The CEO, PM, or tech lead describes the business outcome in natural language. No code, no tech spec. Pure product intent. The AI draws the project context from CLAUDE.md.

02

Structured PRD

The agent generates a PRD from intent + project context. Features, constraints, acceptance criteria, edge cases. Human validates and adjusts. This is context engineering.
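A PRD is usable as agent input when its parts are explicit and checkable. Below is a hypothetical sketch of that structure, with field names that are assumptions rather than a standard PRD schema. Its validation step encodes the human-validation gate described above: no feature goes to the agent without acceptance criteria, and the PRD cannot be executed until a human has signed it off.

```typescript
interface Feature {
  id: string;
  description: string;
  acceptanceCriteria: string[]; // testable statements
}

// Hypothetical PRD shape used as the coding agent's brief.
interface Prd {
  intent: string; // the business outcome, in plain language
  features: Feature[];
  constraints: string[]; // e.g. "data stays on EU-hosted infrastructure"
  edgeCases: string[];
  validatedBy?: string; // set once a human has reviewed and adjusted it
}

// Returns blocking problems; an empty array means the agent may start.
function readinessProblems(prd: Prd): string[] {
  const problems: string[] = [];
  if (prd.intent.trim() === "") problems.push("missing intent");
  if (prd.features.length === 0) problems.push("no features");
  for (const f of prd.features) {
    if (f.acceptanceCriteria.length === 0) {
      problems.push(`feature ${f.id} has no acceptance criteria`);
    }
  }
  if (!prd.validatedBy) problems.push("not yet validated by a human");
  return problems;
}

const draft: Prd = {
  intent: "Show monthly cloud spend per service",
  features: [{ id: "F1", description: "Cost breakdown chart", acceptanceCriteria: [] }],
  constraints: ["Data stays on EU-hosted infrastructure"],
  edgeCases: ["A month with no invoices"],
};
console.log(readinessProblems(draft));
```

Acceptance criteria double as the test expectations the agent must satisfy, which is what ties the PRD to the execution step that follows.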

03

Sovereign agent execution

A self-hosted open model (Qwen, Mistral, Kimi) executes autonomously: writes code, runs tests, fixes failures. No data leaves your infrastructure. Full auditability, EU-hosted, GDPR-compliant.

KEY SHIFT
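The "writes code, runs tests, fixes failures" cycle is a bounded feedback loop. This is a minimal sketch under stated assumptions. `propose` and `runTests` are hypothetical injected functions that stand in for the self-hosted model call and the project's test runner, and the iteration cap is an illustrative choice. Everything is kept synchronous for brevity; real model and test calls would be asynchronous. Passing the model in as a parameter is also what keeps the loop model-agnostic, so the same orchestration can drive any of the open models named above.

```typescript
interface TestReport {
  passed: boolean;
  failures: string[];
}

// Hypothetical hooks: a call to a self-hosted model endpoint that returns a
// patch, and a call to the project's test runner for that patch.
type Propose = (task: string, feedback: string[]) => string;
type RunTests = (patch: string) => TestReport;

interface Outcome {
  patch: string | null; // null when the loop gives up
  attempts: number;
}

// Generate -> test -> feed failures back, at most maxAttempts times.
function agentLoop(task: string, propose: Propose, runTests: RunTests, maxAttempts = 3): Outcome {
  let feedback: string[] = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const patch = propose(task, feedback);
    const report = runTests(patch);
    if (report.passed) return { patch, attempts: attempt };
    feedback = report.failures; // the next attempt sees why this one failed
  }
  return { patch: null, attempts: maxAttempts }; // hand over to a human
}

// Toy stand-ins: the first draft fails, the revised one passes.
const propose: Propose = (_task, fb) => (fb.length === 0 ? "draft" : "fixed");
const runTests: RunTests = (p) =>
  p === "fixed" ? { passed: true, failures: [] } : { passed: false, failures: ["expected 2, got 3"] };
console.log(agentLoop("add cost forecast", propose, runTests));
```

The cap matters: without it, an agent stuck on a failing test can loop indefinitely instead of escalating.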

04

Intelligent review

Automated security audit, performance analysis, architecture conformance check. A second AI agent reviews the first. Human validates the final diff.
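The review stage amounts to a merge gate with two layers: automated findings from the reviewing agent, then a human decision on the final diff. Below is a hypothetical sketch of that gate, in which the check names and verdict shape are assumptions. Blocking findings stop the merge outright. Even a clean automated review only makes the diff eligible; approval stays with a human.

```typescript
type Severity = "info" | "warning" | "blocking";

// Hypothetical finding produced by the reviewing agent.
interface Finding {
  check: "security" | "performance" | "architecture";
  severity: Severity;
  message: string;
}

type Decision = "rejected" | "awaiting_human" | "approved";

// Automated findings can only block; approval always requires a human.
function gate(findings: Finding[], humanApproved: boolean): Decision {
  if (findings.some((f) => f.severity === "blocking")) return "rejected";
  return humanApproved ? "approved" : "awaiting_human";
}

// Non-blocking findings are surfaced to the human reviewer, not hidden.
function summary(findings: Finding[]): string {
  return findings.map((f) => `[${f.severity}] ${f.check}: ${f.message}`).join("\n");
}

const review: Finding[] = [
  { check: "performance", severity: "warning", message: "N+1 query in list view" },
];
console.log(gate(review, false), summary(review));
```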

05

Automated CI/CD deploy

Pipeline runs tests, security scans, builds, and deploys. The agent monitors production and self-heals. End-to-end sovereign.

cat mcp-config.json

MCP. The USB-C of AI tooling.

Model Context Protocol, an open standard that lets AI agents connect to any external tool through a single interface. The missing integration layer.
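Under the hood, MCP messages are JSON-RPC 2.0. A client discovers a server's tools with `tools/list` and invokes one with `tools/call`, passing the tool `name` and its `arguments`. The sketch below only builds and dispatches such messages in-process. It leaves out the transport (stdio or HTTP) and the initialization handshake, and the `echo` tool is hypothetical. For real servers, the official MCP SDKs handle all of that.

```typescript
// JSON-RPC 2.0 envelopes, as used by MCP.
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

interface JsonRpcResponse {
  jsonrpc: "2.0";
  id: number;
  result?: unknown;
  error?: { code: number; message: string };
}

// A hypothetical tool registry standing in for an MCP server.
interface Tool {
  description: string;
  handler: (args: Record<string, unknown>) => string;
}

const tools: Record<string, Tool> = {
  echo: { description: "Returns its input text", handler: (a) => String(a.text ?? "") },
};

// Dispatch one request: list tools, call a tool, or report an error.
function handle(req: JsonRpcRequest): JsonRpcResponse {
  const base = { jsonrpc: "2.0" as const, id: req.id };
  if (req.method === "tools/list") {
    const list = Object.entries(tools).map(([name, t]) => ({
      name,
      description: t.description,
      inputSchema: { type: "object" },
    }));
    return { ...base, result: { tools: list } };
  }
  if (req.method === "tools/call") {
    const name = String(req.params?.name ?? "");
    const tool = tools[name];
    if (!tool) return { ...base, error: { code: -32602, message: `Unknown tool: ${name}` } };
    const args = (req.params?.arguments ?? {}) as Record<string, unknown>;
    return { ...base, result: { content: [{ type: "text", text: tool.handler(args) }] } };
  }
  return { ...base, error: { code: -32601, message: "Method not found" } };
}

console.log(JSON.stringify(handle({
  jsonrpc: "2.0", id: 1, method: "tools/call", params: { name: "echo", arguments: { text: "hi" } },
})));
```

Because every tool sits behind the same two methods, an agent that speaks this protocol can use a database, a browser driver, or an internal API without bespoke glue for each one.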

What MCP enables

One protocol to connect agents to any service

Database access, API calls, file systems, browsers

Playwright MCP: automated browser testing from AI

Real-time context retrieval during agent sessions

Standardized, composable, open source

Sovereign vision

Self-hosted MCP servers as enterprise infrastructure

Agent ↔ internal tools without cloud dependency

Browserlet uses this pattern: AI generates, execution is deterministic

The bridge between sovereign AI and your existing stack

$ claude "test this PR in Chrome and Firefox"

● MCP → Playwright launching browsers...

● Chrome: ✓ 24 tests passed

● Firefox: ✗ 1 layout regression detected

● Generating fix... ✓ committed

git show HEAD:last-commit.txt

I stopped coding.

I changed my abstraction layer_

December 2025 was not my last line.

It was my last line written by hand.

cat roadmap.md

Start now. Three horizons.

The models you use today are the worst they will ever be. Here is how to prepare.

TODAY

First contact

1. Install Claude Code or Gemini CLI

2. Write your first CLAUDE.md on an existing project

3. Build one small tool you actually need

4. Test relentlessly after each change

30 DAYS

Production MVP

1. Ship a real project using AI across the full lifecycle

2. Write structured PRDs as agent input

3. Develop your personal prompting system

4. Set up quality gates: security, tests, review

5. Adopt a framework (BMAD, GSD, Apex, Superpowers) and adapt it to your team

90 DAYS

Team scale + sovereignty

1. Deploy a sovereign stack: self-hosted open models

2. Multi-agent orchestration across the team

3. Context engineering as team practice (shared CLAUDE.md)

4. Measure: time-to-orbit, defect rate, developer satisfaction
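All three measures are straightforward to compute once the raw events are logged per project. This hypothetical sketch makes several assumptions: the record shape, time-to-orbit defined as calendar days from kickoff to first production deploy, defect rate expressed per 1,000 lines changed, and satisfaction taken from a 1-to-5 survey. Teams will want their own definitions; the value lies in fixing them before comparing "before" and "after".

```typescript
// Hypothetical per-project record; dates are ISO "YYYY-MM-DD" strings.
interface ProjectRecord {
  kickoff: string;
  firstProdDeploy: string;
  linesChanged: number;
  defectsFound: number; // defects reported after deploy
  satisfaction: number[]; // survey scores, 1 to 5
}

const DAY_MS = 24 * 60 * 60 * 1000;

// Date-only ISO strings parse as UTC midnight, so the difference is whole days.
function timeToOrbitDays(p: ProjectRecord): number {
  return Math.round((Date.parse(p.firstProdDeploy) - Date.parse(p.kickoff)) / DAY_MS);
}

function defectsPerKloc(p: ProjectRecord): number {
  return p.linesChanged === 0 ? 0 : (p.defectsFound * 1000) / p.linesChanged;
}

function meanSatisfaction(p: ProjectRecord): number {
  const s = p.satisfaction;
  return s.length === 0 ? NaN : s.reduce((a, b) => a + b, 0) / s.length;
}

const pilot: ProjectRecord = {
  kickoff: "2026-01-05",
  firstProdDeploy: "2026-01-08",
  linesChanged: 4000,
  defectsFound: 2,
  satisfaction: [4, 5, 3],
};
console.log(timeToOrbitDays(pilot), defectsPerKloc(pilot), meanSatisfaction(pilot));
```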

cat OFFER.md

Now: your turn.

We are building an acceleration offer for dev teams who want to make this shift, with sovereignty by design and not as an afterthought.

We are looking for partners to co-build it with us.

If you want to go from 3 months to 3 days in your own team, let's talk.

All projects are open source and publicly available on GitHub.

cat glossary.md

Glossary.

Context Engineering

The practice of building a structured work environment for an AI agent: architecture decisions, conventions, prohibitions, vocabulary, and project memory. Not prompting. Engineering the context in which the AI operates.

CLAUDE.md

A project-root file that Claude Code reads before every session. Contains architecture decisions, coding conventions, explicit prohibitions, and domain vocabulary. The persistent memory of the AI within your project.

Meta-prompting

Instructing the AI on how to work, not just what to do. Defining its reasoning approach, review process, error handling, and behavioral constraints before it starts coding.

PRD

Product Requirements Document. A structured specification that serves as the operational brief for the coding agent: features, constraints, acceptance criteria, edge cases. Replaces the verbal back-and-forth of vibe coding.

Vibe Coding

Development by conversation: describe what you want in natural language, iterate via chat, copy-paste and adjust. A real productivity gain, but still manual driving. You translate intent one exchange at a time.

Coding Agent

An AI that executes autonomously inside your project: reads files, writes code, runs tests, fixes failures, commits. Not a chat assistant, a collaborator with full project access. Claude Code is the reference implementation.

Architectural Drift

The dominant error type with AI-generated code. The AI produces working code that gradually diverges from the intended architecture. Context engineering is the antidote: explicit constraints prevent drift before it starts.

Sovereign AI

AI infrastructure hosted on self-controlled servers, using open-weight models (Qwen, Mistral, GLM, Kimi). Full data sovereignty, auditability, and no dependency on US cloud providers. The trajectory of 2026.

cat resources.md

Go further.

Curated resources to deepen your understanding of context engineering, agentic frameworks, and AI-assisted development.

Beyond Vibe Coding

Addy Osmani · Google

A comprehensive guide to AI-assisted development. Covers the full spectrum from vibe coding to production-ready engineering: prompt techniques, context engineering, CLI agents, multi-agent orchestration, and preparing for the future.

⎋ beyond.addy.ie

Agentic Skills Frameworks Compared

Ry Walker

A research comparison of 11 AI agent skills frameworks (BMAD, GSD, Apex, Superpowers, and others). Categorizes them into methodology enforcers, official skill catalogs, and orchestration platforms. Essential reading before choosing your framework.

⎋ rywalker.com/research/agentic-skills-frameworks